Last year’s CES was a time of reckoning for lidar companies, many of which were cratering for lack of demand from a (still) nonexistent autonomous vehicle industry. The few that distinguished themselves did so by specializing, and this year the trend has moved beyond lidar, with new sensing and imaging methods aiming to both compete with and complement laser-based technology.

Lidar pulled ahead of traditional cameras because it could do things they couldn’t, and now some companies are pushing to do the same with slightly less exotic technologies.

A good example of a different approach to the problem of perception is Eye Net’s vehicle-to-X tracking platform. This is one of those technologies that has been talked about in the context of 5G (still somewhat exotic, admittedly), which, for all the hype, really does enable short-range, low-latency applications that could be lifesaving.

Eye Net provides collision warnings between vehicles equipped with its technology, whether or not they have cameras or other sensors. The example the company gives is a car driving through a parking lot, unaware that a person on one of those terribly unsafe electric scooters is moving perpendicular to it, about to zoom into its path, but completely hidden by parked cars. Eye Net’s sensors detect the positions of the devices on both vehicles and send warnings in time for one or both of them to brake.

Credit: Eye Net

They’re not the only ones attempting something like this, but they hope that by offering a sort of white-label solution, a good-sized network can be built relatively easily, rather than growing piecemeal: first none, then all VWs equipped, then some Fords, then some e-bikes, and so on.
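The parking-lot scenario boils down to a closest-approach check between two moving devices. The sketch below is a generic constant-velocity version of that check, assuming both devices report position and velocity in a shared local frame in metres. Eye Net has not described its actual algorithm here, and the names `Track`, `closest_approach` and `should_warn`, along with the 2 m / 4 s thresholds, are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Track:
    """Position (m) and velocity (m/s) of one device in a shared local frame (assumed)."""
    x: float
    y: float
    vx: float
    vy: float


def closest_approach(a: Track, b: Track) -> tuple[float, float]:
    """Return (time_s, distance_m) of closest approach, assuming constant velocities."""
    rx, ry = b.x - a.x, b.y - a.y      # position of b relative to a
    wx, wy = b.vx - a.vx, b.vy - a.vy  # velocity of b relative to a
    w2 = wx * wx + wy * wy
    # With (almost) no relative motion the gap never changes, so "now" is closest.
    # Otherwise minimise |r + w*t| over t >= 0 (approaches in the past don't matter).
    t = 0.0 if w2 < 1e-9 else max(0.0, -(rx * wx + ry * wy) / w2)
    return t, math.hypot(rx + wx * t, ry + wy * t)


def should_warn(a: Track, b: Track, radius_m: float = 2.0, horizon_s: float = 4.0) -> bool:
    """Warn if the two devices will pass within radius_m of each other within horizon_s."""
    t, d = closest_approach(a, b)
    return d < radius_m and t <= horizon_s


# A car heading north at 5 m/s and a scooter, hidden behind parked cars,
# heading west at 5 m/s toward the same spot.
car = Track(x=0.0, y=0.0, vx=0.0, vy=5.0)
scooter = Track(x=10.0, y=10.0, vx=-5.0, vy=0.0)
print(closest_approach(car, scooter), should_warn(car, scooter))  # (2.0, 0.0) True
```

Neither device needs a line of sight to the other; the check uses only the positions the devices report. A real system would also have to cope with positioning error and changing velocities, which this sketch ignores.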

But vision will still be an integral part of vehicle navigation, and progress is being made on several fronts.

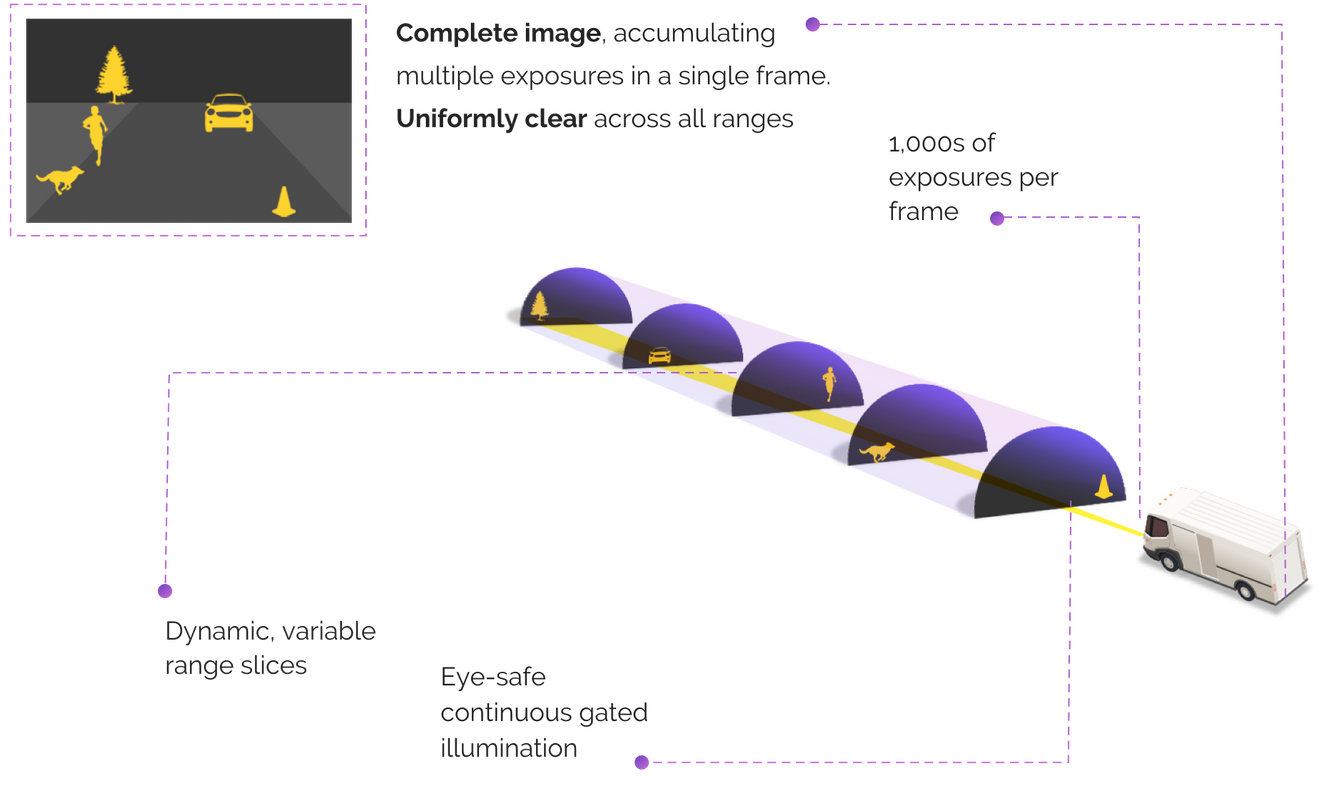

Brightway Vision, for example, addresses the limited visibility of ordinary RGB cameras in many real-world conditions by going multispectral. In addition to the usual visible-light imagery, the company’s camera is paired with a near-infrared beamer that scans the road ahead at set distance intervals many times per second.

Credit: Brightway Vision

The idea is that if the main camera can’t see 100 feet ahead because of fog, the NIR imagery will still catch obstacles or road features as the beamer scans that “slice” of the area ahead. It combines the advantages of traditional cameras with those of IR cameras while managing to avoid the shortcomings of both. The pitch is that there’s no reason to use a regular camera when you could use one of these, which does the same job better and may even allow another sensor to be cut out.
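Slicing the road by distance is commonly done with range gating: the sensor opens its shutter only during the window in which a light pulse could have made the round trip to that slice and back. Assuming Brightway’s beamer works along these lines (the article does not give its timing), the gate delays follow from t = 2d/c. The helper names and the 50 m slice depth below are hypothetical.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light


def gate_window_ns(near_m: float, far_m: float) -> tuple[float, float]:
    """Delays (ns) after the NIR pulse at which to open and close the shutter so that
    only light reflected from between near_m and far_m is recorded (pulse width ignored)."""
    if not 0.0 <= near_m < far_m:
        raise ValueError("need 0 <= near_m < far_m")
    return 2 * near_m / C_M_PER_S * 1e9, 2 * far_m / C_M_PER_S * 1e9


def slices(max_range_m: float, depth_m: float) -> list[tuple[float, float]]:
    """Split the road ahead into consecutive distance slices of equal depth."""
    n = math.ceil(max_range_m / depth_m)
    return [(i * depth_m, min((i + 1) * depth_m, max_range_m)) for i in range(n)]


for near, far in slices(150.0, 50.0):
    open_ns, close_ns = gate_window_ns(near, far)
    print(f"{near:5.0f}-{far:3.0f} m: open at {open_ns:6.1f} ns, close at {close_ns:6.1f} ns")
```

Because each exposure only admits light returning from its own distance band, light scattered back by fog closer than the slice is largely rejected, which is the usual argument for gating in poor visibility.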

Foresight Automotive also uses multispectral imagery in its cameras (chances are that in a few years hardly any vehicle camera will be limited to the visible spectrum), dipping into thermal through a partnership with FLIR, but what it’s really selling is something else.

Multiple cameras are generally required for 360-degree coverage (or something close to it). But where those cameras go differs between a compact sedan and an SUV from the same manufacturer, let alone an autonomous cargo vehicle. Since these cameras have to work together, they must be perfectly calibrated and know each other’s exact positions, so that they can tell, for example, that both are looking at the same tree or cyclist and not at two identical ones.

Credit: Foresight Automotive

Foresight’s advance is to simplify the calibration stage, so that a manufacturer, designer or test platform doesn’t need to be laboriously retested and recertified every time the cameras have to be moved half an inch in one direction or the other. The Foresight demo shows them sticking cameras onto the car’s roof seconds before driving off.
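Why calibration matters can be seen in a bird’s-eye sketch: each camera’s detection is mapped into a common vehicle frame using that camera’s mounting pose (its extrinsics), and two detections are treated as the same object only if they land close together. This is a generic 2D illustration, not Foresight’s method; the `CameraPose` type, the poses, and the 0.3 m tolerance are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class CameraPose:
    """Mounting pose of a camera on the vehicle, seen from above (hypothetical values)."""
    x: float    # metres forward of the vehicle reference point
    y: float    # metres to the left of the reference point
    yaw: float  # heading relative to the vehicle's forward axis, radians


def to_vehicle(pose: CameraPose, px: float, py: float) -> tuple[float, float]:
    """Rotate, then translate, a point from the camera frame into the vehicle frame."""
    c, s = math.cos(pose.yaw), math.sin(pose.yaw)
    return pose.x + c * px - s * py, pose.y + s * px + c * py


def same_object(pose_a: CameraPose, det_a: tuple[float, float],
                pose_b: CameraPose, det_b: tuple[float, float],
                tol_m: float = 0.3) -> bool:
    """True if two detections map to (nearly) the same spot in the vehicle frame."""
    ax, ay = to_vehicle(pose_a, *det_a)
    bx, by = to_vehicle(pose_b, *det_b)
    return math.hypot(ax - bx, ay - by) <= tol_m


left = CameraPose(x=2.0, y=0.5, yaw=0.0)
right = CameraPose(x=2.0, y=-0.5, yaw=0.0)
# The same cyclist, 12 m ahead on the centreline, as seen by each camera.
det_left, det_right = (10.0, -0.5), (10.0, 0.5)
print(same_object(left, det_left, right, det_right))  # True: poses are correct

# Same readings, but the stored pose for the right camera is off by 2 degrees.
stale_right = CameraPose(x=2.0, y=-0.5, yaw=math.radians(2.0))
print(same_object(left, det_left, stale_right, det_right))  # False
```

At 12 m, a 2-degree error already shifts the point by about 0.35 m, enough to turn one cyclist into two, which is why cameras that get moved have traditionally needed recalibration.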

This has parallels to another startup, Nodar, which also relies on stereoscopic cameras but takes a different approach. The technique of inferring depth from binocular triangulation, as the company points out, goes back decades, or millions of years if you count our own visual system, which works in a similar way. The limitation that has held this approach back is not that optical cameras fundamentally cannot provide the depth information an autonomous vehicle needs, but that they cannot be trusted to stay calibrated.

Nodar shows that its paired stereo cameras don’t even need to be mounted to the main mass of the car, a placement that would reduce jitter and slight mismatches between the cameras’ views. Its “hammerhead” camera setup, attached to the rearview mirrors, has a wide stance (like the shark’s), which improves accuracy because of the greater disparity between the cameras. Because distance is determined from the differences between the two images, it doesn’t take object recognition or complex machine learning to say, “This is a shape, probably a car, probably about this big, which means it’s probably about this far away,” as it might with a single-camera solution.

Credit: Nodar

“The industry has already shown that camera arrays work just as well as human eyes in harsh weather conditions,” said Nodar COO and co-founder Brad Rosen. “For example, Daimler engineers have published results showing that current stereoscopic approaches provide much more stable depth estimates than monocular methods and LiDAR completion in bad weather. The nice thing about our approach is that the hardware we use today is automotive-grade, with many choices of manufacturers and distributors.”
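The geometry behind the hammerhead layout is standard stereo triangulation. For rectified cameras with focal length f (in pixels) and baseline B, a point with disparity d lies at depth Z = fB/d, and a disparity error Δd maps to a depth error of roughly Z²Δd/(fB), so widening the baseline shrinks the depth error proportionally. The sketch assumes ideal rectified pinhole cameras; the focal length, baselines, and 0.25 px disparity error are illustrative values, not Nodar’s specifications.

```python
def depth_from_disparity(f_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth (m) of a point seen by a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (point at finite distance)")
    return f_px * baseline_m / disparity_px


def depth_error(f_px: float, baseline_m: float, depth_m: float,
                disparity_err_px: float = 0.25) -> float:
    """First-order depth uncertainty: dZ ~= Z^2 * dd / (f * B)."""
    return depth_m ** 2 * disparity_err_px / (f_px * baseline_m)


F_PX = 1400.0  # illustrative focal length in pixels
for name, baseline in [("narrow baseline", 0.3), ("wide baseline", 2.0)]:
    d = F_PX * baseline / 100.0  # disparity of an object 100 m away
    z = depth_from_disparity(F_PX, baseline, d)
    print(f"{name}: B={baseline} m, disparity={d:.1f} px, "
          f"Z={z:.1f} m, error ~ {depth_error(F_PX, baseline, z):.2f} m")
```

No object recognition is involved: depth comes straight from the measured disparity, which is the contrast the article draws with single-camera estimation.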

Indeed, cost has been a huge blow to lidar: even “cheap” units are usually orders of magnitude more expensive than ordinary cameras, which adds up very quickly. But Team Lidar hasn’t been standing still either.

Sense Photonics came onto the scene with a new approach that seemed to combine the best of both worlds: a relatively cheap and simple flash lidar (as opposed to spinning or scanning designs, which add complexity) paired with a traditional camera, so that the two see versions of the same image and can work together to identify objects and establish distances.

Since debuting in 2019, Sense has been refining its technology for production and beyond. The latest advance is custom hardware that lets it image objects out to 200 meters, generally considered the far end of the range for both lidar and conventional cameras.
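A flash lidar illuminates the whole scene with one pulse, and each detector pixel times its own echo; with direct time of flight, range is R = c·t/2. This sketch assumes that direct-ToF model (the article does not describe Sense’s detector processing), and the `ranges_m` helper and sample frame are hypothetical. It also shows why 200 m is demanding: the echo returns about 1.33 µs after the pulse, and every nanosecond of timing error is roughly 15 cm of range error.

```python
from typing import Optional

C_M_PER_S = 299_792_458.0  # speed of light


def round_trip_ns(range_m: float) -> float:
    """Time (ns) for a pulse to reach a target at range_m and return."""
    return 2 * range_m / C_M_PER_S * 1e9


def ranges_m(echo_times_ns: list[list[Optional[float]]]) -> list[list[Optional[float]]]:
    """Convert a frame of per-pixel echo times (ns) to ranges (m); None means no echo."""
    return [[None if t is None else C_M_PER_S * t * 1e-9 / 2 for t in row]
            for row in echo_times_ns]


print(f"{round_trip_ns(200.0):.1f} ns")  # 1334.3 ns round trip at 200 m

# A hypothetical 2x2 frame: a near target, a missing return, a far target, and 100 ns.
frame = [[round_trip_ns(20.0), None], [round_trip_ns(200.0), 100.0]]
print(ranges_m(frame))
```

Every pixel gets its range from the same single exposure, which is what lets a flash design drop the moving parts of spinning or scanning units.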

“In the past, we purchased an off-the-shelf detector to pair with our laser source (the Sense Illuminator). However, our two years of in-house detector development have now come to completion, a huge achievement that enables us to build both short- and long-range automotive products,” said CEO Shauna McIntyre.

“Sense has created ‘building blocks’ for a camera-like LiDAR design that can be combined with different sets of optics to achieve different fields of view, ranges, resolutions, etc.,” she continued. “And we’ve done it in a very simple design that can actually be manufactured in high volumes. You can think of our architecture like a DSLR camera, where you have the ‘base camera’ and can pair it with a macro lens, zoom lens, fisheye lens, etc. to achieve different functions.”
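The DSLR analogy rests on simple lens geometry: with the same detector, the focal length of the attached optics sets the field of view, and spreading a fixed number of pixels over a narrower field buys finer angular resolution, which helps at long range. The sketch assumes an ideal rectilinear (pinhole) lens; the 10 mm detector width, 500-pixel row, and focal lengths are hypothetical, not Sense’s figures. Fisheye lenses deliberately depart from this model, so they are left out.

```python
import math


def horizontal_fov_deg(sensor_width_mm: float, focal_length_mm: float) -> float:
    """Horizontal field of view of an ideal rectilinear lens: 2 * atan(w / 2f)."""
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


SENSOR_WIDTH_MM = 10.0  # hypothetical detector width
PIXELS_ACROSS = 500     # hypothetical number of detector columns

for lens, f_mm in [("wide", 5.0), ("standard", 10.0), ("telephoto", 20.0)]:
    fov = horizontal_fov_deg(SENSOR_WIDTH_MM, f_mm)
    print(f"{lens:9s} f={f_mm:4.1f} mm: FOV {fov:5.1f} deg, "
          f"~{fov / PIXELS_ACROSS:.3f} deg per pixel on average")
```

The same detector goes from a 90-degree view with coarse pixels to a roughly 28-degree view with pixels about three times finer, the short-range versus long-range trade the quote describes.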

One thing all the companies seemed to agree on is that no single sensing modality will dominate the industry from top to bottom. Leaving aside that the requirements of a fully autonomous vehicle (i.e., level 4 or 5) are very different from those of a driver-assistance system, the field is moving too fast for any one approach to stay on top for long.

“AV companies cannot succeed if the public isn’t convinced that their platform is safe, and safety margins only increase with redundant sensor modalities operating at different wavelengths,” said McIntyre.

Whether that means visible light, near-infrared, thermal imaging, radar, lidar or, as we’ve seen here, a combination of two or three of these, it’s clear the market will continue to favor differentiation, though the boom-and-bust cycle seen in the lidar industry a few years ago also warns that consolidation won’t be far behind.
